A self-driving car from Uber Technologies Inc. hit and killed a woman in Tempe, Arizona, on Sunday evening, in what is likely the first pedestrian fatality involving a driverless vehicle.

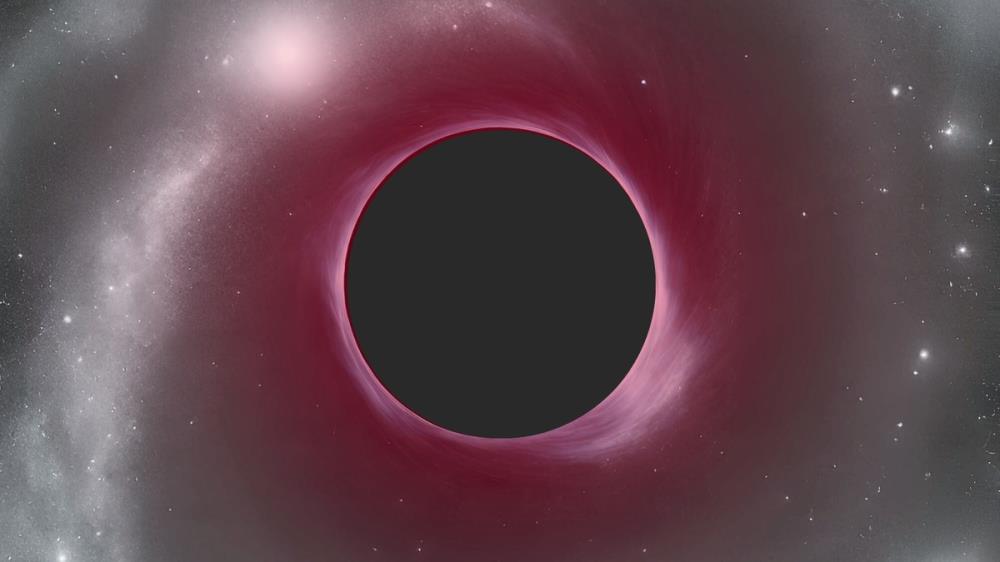

Unfortunate... I think Uber rushed into the autonomous game without the kind of proper testing that Tesla and Google are doing.

Wonder how long this will take to at least be accepted? I think we are still a ways off from viewing an autonomous vehicle crash as we would one driven by a person. I wouldn't get in a self-driven car, personally -- but I recognise that that's a sign of changing times... I imagine people had the same attitudes about aeroplanes in the early 20th century.

Had to happen. A bug in a videogame may be excusable. Bugs in a 2-ton guided missile are not, yet they will still happen, because programmers are not infallible, and can't account for all possible conditions. Once the world fills up with autonomous machines, it will be interesting to see how laws evolve to deal with it. Is this accident criminal negligence, or is it an empty hole in criminal law? Someone lost her life either way.