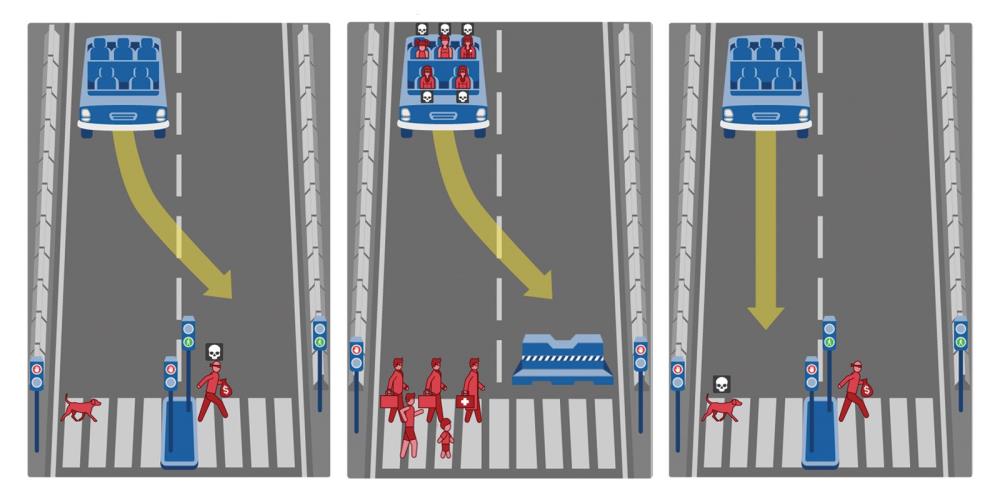

Philosophers have been gnawing on the infamous Trolley Problem for decades, and it’s always been a purely intellectual exercise with no “right” answer. But we’re suddenly in a world in which autonomous machines, including self-driving cars, have to be programmed to deal with Trolley Problem-like emergencies in which lives hang in the balance. There’s no dodging the issue: The programmers have to decide how machines can behave appropriately in crunch time (as it were).

Hours after Uber launched driverless cars in Pittsburgh, aldermen proposed an ordinance to ban driverless cars in Chicago.

The company’s fleet of robotic cars will be supervised by humans in the driver’s seat.

With Google, Uber and Tesla working to get driverless cars on the road, the streets will soon be controlled by computers. But there are important questions.

"When should driverless cars kill their own passengers?"

The very moment the passenger opts for a driverless car, of course.

Computers are inherently stupid; don't let them dumb you down by relying on them.

Most of all, don't trust them with your life when it isn't 100% necessary.

We are slowly becoming the race of the helpless. Pathetic.